AutoCSS: A Hackathon Retrospective

In 2018, I built a tool to convert hand-drawn wireframes to HTML using OpenCV contour detection. Eight years later, VLMs do this with a single API call. A love letter to scrappy engineering.

The Pitch

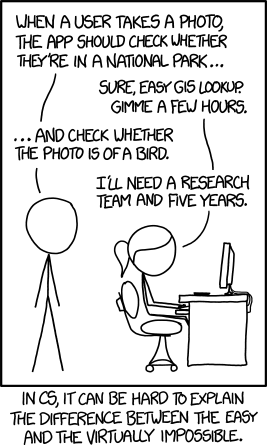

In 2018, at a hackathon somewhere in my early undergrad, I had what felt like a brilliant idea: what if you could sketch a website layout on paper, point a camera at it, and have working HTML pop out the other end?

The comic’s punchline is that identifying whether a photo contains a bird requires a research team and five years. Converting hand-drawn wireframes to code? That was our bird.

But we were undergrads with 48 hours, a laptop, and the unwavering confidence that comes from not knowing what you don’t know.

So we built it anyway.

How It Works

Here’s what we were trying to do:

    ┌─────────────┐            ┌─────────────┐
    │  ┌───────┐  │            │ <div>       │
    │  │  ███  │  │            │   <div>     │
    │  ├───┬───┤  │    ───▶    │     ...     │
    │  │ █ │ █ │  │            │   </div>    │
    │  └───┴───┘  │            │ </div>      │
    └─────────────┘            └─────────────┘
       hand-drawn                HTML output

The key insight: OpenCV already knows how to find nested shapes. We just had to convince it that rectangles-inside-rectangles is the same thing as divs-inside-divs.

The Pipeline

    ┌──────────┐    ┌──────────┐    ┌──────────┐    ┌──────────┐    ┌──────────┐
    │  Input   │    │ Cleanup  │    │  Edges   │    │ Contours │    │  Output  │
    │ ──────── │    │ ──────── │    │ ──────── │    │ ──────── │    │ ──────── │
    │  photo   │───▶│threshold │───▶│  canny   │───▶│RETR_TREE │───▶│   HTML   │
    │    of    │    │  + blur  │    │   edge   │    │ hierarchy│    │   DOM    │
    │ drawing  │    │          │    │detection │    │          │    │   tree   │
    └──────────┘    └──────────┘    └──────────┘    └──────────┘    └──────────┘

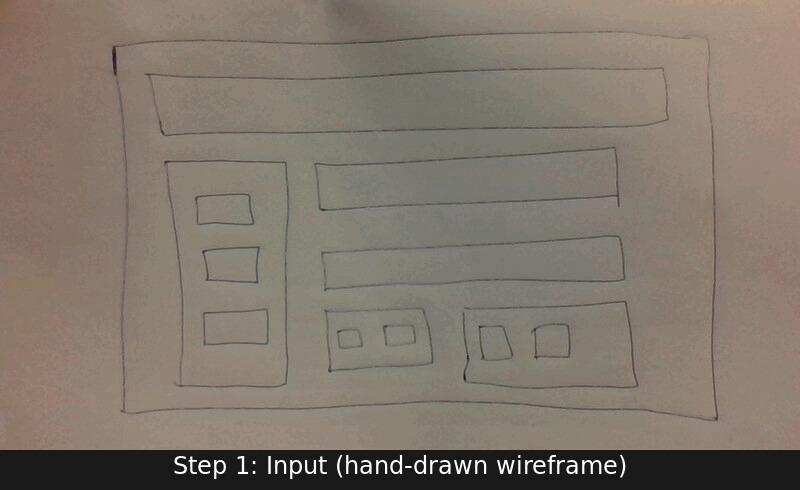

And here’s what that actually looked like:

Each frame shows a real intermediate output from the pipeline:

- Input: The hand-drawn wireframe on paper

- Binarized: Threshold to clean black-on-white

- Edges: Canny edge detection finds the lines

- Contours: OpenCV traces every closed shape (notice the double lines!)

- Output: Clean bounding rectangles, ready for DOM conversion

The magic happens in step 4. Let me show you.

The RETR_TREE Trick

When you call cv2.findContours(), you can choose how to organize the results.

Most tutorials use RETR_EXTERNAL (just outer shapes) or RETR_LIST (flat list).

We used RETR_TREE.

This returns the full parent-child hierarchy of every contour. And here’s the thing: a contour hierarchy is already a DOM tree.

    What OpenCV returns:        What HTML needs:

    Contour 0 (outermost)       <div>              ← root
    ├── Contour 1                 <div>            ← child
    │   ├── Contour 3               <div/>         ← grandchild
    │   └── Contour 4               <div/>         ← grandchild
    │                             </div>
    └── Contour 2                 <div/>           ← child
                                </div>

Same structure. Different syntax.
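For readers who haven't looked inside the hierarchy array: OpenCV stores one row per contour in the form `[next_sibling, previous_sibling, first_child, parent]`, with -1 meaning "none". Here is a small sketch of the five-contour example above written out by hand. The index values are assumptions chosen to match the diagram (real indices depend on the image), and `children_of` and `roots` are hypothetical helper names.

```python
# Each row is [next_sibling, previous_sibling, first_child, parent]; -1 means "none".
# Hand-written to match the five-contour diagram; the outer list mimics
# the (1, N, 4) shape that cv2.findContours returns.
HIERARCHY = [[
    [-1, -1,  1, -1],  # contour 0: root, first child is 1
    [ 2, -1,  3,  0],  # contour 1: next sibling is 2, first child is 3
    [-1,  1, -1,  0],  # contour 2: leaf, child of 0
    [ 4, -1, -1,  1],  # contour 3: leaf, next sibling is 4
    [-1,  3, -1,  1],  # contour 4: leaf, child of 1
]]


def children_of(idx, hierarchy):
    """Collect child indices by following first_child, then next_sibling links."""
    children = []
    child = hierarchy[0][idx][2]
    while child != -1:
        children.append(child)
        child = hierarchy[0][child][0]
    return children


def roots(hierarchy):
    """Top-level contours are the ones with no parent."""
    return [i for i, row in enumerate(hierarchy[0]) if row[3] == -1]


for i in range(len(HIERARCHY[0])):
    print(i, "->", children_of(i, HIERARCHY))
```

The child lists are never stored explicitly. They fall out of chasing two links per node, which is why a recursive walk over this structure can stay so short.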

The recursive function to walk this tree is tiny:

    def build_dom(contour_idx, hierarchy):
        """Walk contour tree, emit nested divs."""
        node = hierarchy[0][contour_idx]
        child_idx = node[2]  # First child
        if child_idx == -1:
            return "<p>content</p>"  # Leaf node
        html = ""
        while child_idx != -1:
            html += f"<div>{build_dom(child_idx, hierarchy)}</div>"
            child_idx = hierarchy[0][child_idx][0]  # Next sibling
        return html

That’s it. That’s the whole DOM generation. Ten lines.

The Double Contour Problem

Of course, nothing is that simple. Hand-drawn lines aren’t perfect geometric shapes. Edge detection on thick marker strokes produces… interesting results.

When Canny runs on a hand-drawn line, it finds two edges, one on each side of the ink stroke. This means every rectangle becomes two nested contours:

    What you draw:            What OpenCV sees:

    ████████                  ┌──────────┐  ← outer edge of ink
    █      █                  │ ┌──────┐ │  ← inner edge of ink
    █      █       ───▶       │ │      │ │
    █      █                  │ │      │ │
    ████████                  │ └──────┘ │
                              └──────────┘

    One rectangle             Two nested contours!

Without fixing this, every <div> would have a useless wrapper div inside it.
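A bit of arithmetic shows how close in size the two contours of a single stroke are, compared with a box that is genuinely nested. The numbers below are made up for illustration (a 300x200 box drawn with a 6-pixel stroke), and `inner_box` and `area_ratio` are hypothetical helpers, not functions from the original project.

```python
def inner_box(outer, stroke):
    """Shrink an (x, y, w, h) box by the stroke width on every side."""
    x, y, w, h = outer
    return (x + stroke, y + stroke, w - 2 * stroke, h - 2 * stroke)


def area_ratio(child, parent):
    """Child area as a fraction of parent area."""
    return (child[2] * child[3]) / (parent[2] * parent[3])


outer = (100, 200, 300, 200)        # outer edge of the ink
ghost = inner_box(outer, stroke=6)  # inner edge of the same stroke
real_child = (110, 210, 140, 180)   # a genuinely nested box

print(round(area_ratio(ghost, outer), 3))       # 0.902
print(round(area_ratio(real_child, outer), 3))  # 0.42
```

The ghost contour covers about 90% of its parent, while the real child covers well under half, so a size comparison has plenty of room to tell them apart.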

The Fix: Skip Single Children

The solution is elegant: if a contour has exactly one child, and that child is roughly the same size, treat the child as a duplicate, skip it, and promote the grandchildren:

    Before:                         After:

        ┌───┐                           ┌───┐
        │ 0 │                           │ 0 │
        └─┬─┘                           └─┬─┘
          │                               │
        ┌─┴─┐  ← single child,        ┌───┴────┐
        │ 1 │    same size            │        │
        └─┬─┘    (duplicate!)       ┌─┴─┐    ┌─┴─┐
          │                         │ 2 │    │ 3 │
      ┌───┴────┐                    └───┘    └───┘
      │        │
    ┌─┴─┐    ┌─┴─┐                  Grandchildren promoted!
    │ 2 │    │ 3 │
    └───┘    └───┘

The dup_tree() function walks the tree bottom-up, pruning these ghost nodes.

It’s 15 lines of Python and it turned the output from “unusable mess of nested wrappers” to “actually correct DOM structure.”
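The original dup_tree() isn't reproduced here. What follows is a minimal sketch of the same idea, assuming the contour tree has already been converted into plain objects with bounding boxes. The `Node` class, the `prune_ghosts` name, and the 0.8 area-ratio threshold are all hypothetical choices for illustration.

```python
from dataclasses import dataclass, field


@dataclass
class Node:
    box: tuple                                    # (x, y, w, h) bounding rectangle
    children: list = field(default_factory=list)


def area(box):
    return box[2] * box[3]


def prune_ghosts(node, min_ratio=0.8):
    """Bottom-up: drop a lone child that is nearly as big as its parent."""
    for child in node.children:
        prune_ghosts(child, min_ratio)
    while len(node.children) == 1:
        only = node.children[0]
        if area(only.box) < min_ratio * area(node.box):
            break                                 # a real, smaller child: keep it
        node.children = only.children             # promote the grandchildren
    return node


# The Before/After tree: 0 -> 1 (ghost) -> 2, 3
root = Node((0, 0, 300, 200), [
    Node((6, 6, 288, 188), [                      # inner edge of the same stroke
        Node((20, 20, 120, 160)),
        Node((160, 20, 120, 160)),
    ]),
])
prune_ghosts(root)
print([child.box for child in root.children])
```

After pruning, the root's children are the two inner boxes directly, with the ghost layer gone. A fixed ratio is a blunt instrument: very thick strokes on small boxes could fall below it, so the threshold would need tuning against real drawings.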

Nested boxes became nested divs. That’s not nothing.

The Twist

To understand why our approach made sense, you need to understand what the world looked like in 2018.

    ┌─────────────────────────────────────────────────────────────────┐
    │                      WHAT WAS HOT IN 2018                       │
    ├─────────────────────────────────────────────────────────────────┤
    │                                                                 │
    │  GANs          ████████████████████████████  CycleGAN, pix2pix  │
    │  CNNs          ███████████████████████  “ImageNet is solved”    │
    │  RNNs          ██████████████  Seq2seq, attention               │
    │  RL            █████████  AlphaGo hype                          │
    │                                                                 │
    │  Transformers  ██  (2017 paper, still ignored)                  │
    │                                                                 │
    └─────────────────────────────────────────────────────────────────┘

The transformer paper, “Attention Is All You Need,” had been published the year before, on June 12, 2017. By our 2018 hackathon, nobody around us thought it was about to rewrite this whole problem class.

At a hackathon in 2018, you used OpenCV:

- Ran on any laptop (no GPU required)

- Excellent Python bindings

- Thousands of tutorials

- Actually worked in 48 hours

Deep learning meant training CNNs for days on a GPU cluster. Not hackathon-friendly.

What Others Were Building

We weren’t alone in trying sketch-to-code:

| Project | Approach | Result |
|---|---|---|
| Pix2Code (2017) | CNN + LSTM | 77% accuracy on 16 synthetic tokens |
| Airbnb (2017) | Component classifier | Only worked with their 150 pre-defined components |
| Microsoft Sketch2Code (2018) | Azure CV + rules | Supported 5 element types |

Everyone had the same idea. Nobody cracked it.

Then, March 2023

The GPT-4 Developer Livestream. Greg Brockman photographs a hand-drawn sketch in a notebook. Seconds later: a working website.

The exact project we built in 2018. But working perfectly, instantly, with no edge detection, no RETR_TREE, no “draw with thick marker in good lighting.”

The Difference

    AutoCSS (2018):          GPT-4V (2024):

    ┌──────────┐             ┌──────────┐
    │  pixels  │             │  pixels  │
    └────┬─────┘             └────┬─────┘
         │                        │
         ▼                        │
    ┌──────────┐                  │
    │  edges   │                  │
    └────┬─────┘                  │
         │                        │
         ▼                        ▼
    ┌──────────┐             ┌──────────┐
    │ contours │             │“I see a  │
    └────┬─────┘             │ search   │
         │                   │ bar and  │
         ▼                   │ a nav    │
    ┌──────────┐             │ menu...” │
    │hierarchy │             └────┬─────┘
    └────┬─────┘                  │
         │                        ▼
         ▼                   ┌──────────┐
    ┌──────────┐             │ working  │
    │nested    │             │ React +  │
    │divs      │             │ Tailwind │
    └──────────┘             └──────────┘

      Geometry               Understanding

Where we saw “rectangle at (100,200) with width 300,” a VLM sees “search bar in header, probably e-commerce, needs placeholder text.”

The Stanford Design2Code benchmark (2024): GPT-4V generated pages that could replace originals 49% of the time on 484 real websites.

Not 77% on 16 synthetic tokens. Near-majority replacement on arbitrary sites.

The Modern Toolkit

- tldraw Make Real: Draw → HTML/Tailwind. ~$0.04 per generation.

- screenshot-to-code: 71K+ GitHub stars. Any mockup → React/Vue/HTML.

- v0.dev: 6M+ developers. Production components from sketches.

One API call. No contour detection required.

Coda

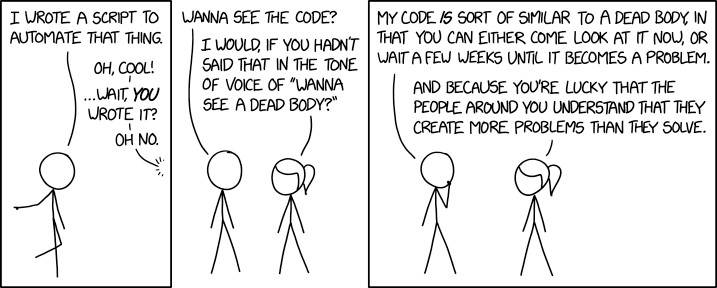

I recently opened the AutoCSS repository for the first time in eight years.

The code is exactly what you’d expect:

- Jupyter notebooks as the main codebase

- Variables named heirarchy (typo preserved for posterity)

- Commented-out print statements everywhere

- Hardcoded paths to lay6.png

It’s a mess. It’s also a time capsule.

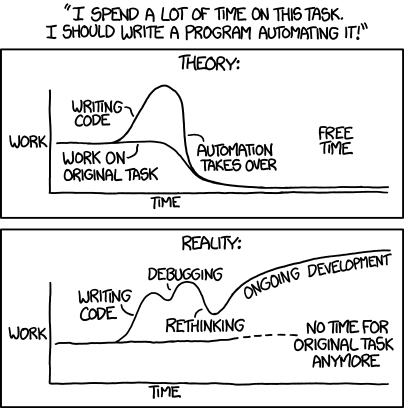

What Hackathons Are For

Hackathons aren’t about production code. They’re about asking “what if?”

What if contour hierarchies mapped to DOM trees? They do. What if you could draw a website and have it appear? You can. What if the weird idea could work? Sometimes.

The Right Idea, Wrong Time

The core insight (visual layouts should convert directly to code) was right. The industry spent eight years proving it.

We were just too early, with tools too primitive.

There’s something satisfying about that. Building something that anticipated where the field was going, even if we couldn’t get there ourselves.

The Code Is Still There

The repository is public. The contours still detect on clean drawings.

It’s not useful anymore. But it’s mine.

And somewhere in those nested loops and misspelled variables is proof that I once spent 48 hours turning hand-drawn boxes into HTML because I thought it would be cool.

It was.